A Joint Transfer Convolutional Processor in Integrated Photonics

Current electronic paradigms for cognitive tasks such as feature extraction with convolutional neural networks are hitting the energy-consumption and speed limits set by the end of Moore’s law.

Furthermore, the underlying operations are computationally intensive. To bypass the wire-charging limits of electronics and the slow response times of liquid crystals, we propose to explore a novel optical processor that speeds up Fourier-optical convolution processing by two to three orders of magnitude.

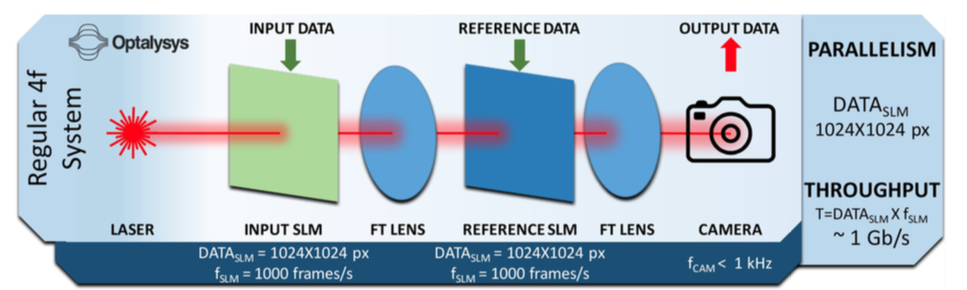

Background: The challenge with current joint transform correlators (JTCs) is that they are clocked at slow (kHz) rates, because they rely on spatial light modulators (SLMs) based on liquid crystal technology (LCT).

Impact: Because this JTC will perform convolutions at ultra-high throughput, computationally demanding applications will benefit from it. These include machine learning with neural networks, demanding scientific and SAR/ATR (synthetic aperture radar/automatic target recognition) computations, DNA sequencing, and RF signal and image processing such as hyper-spectral filtering, all key applications for the DOD. In particular, current and future Electronic Warfare (EW) systems will benefit from the proposed optical processor, since it provides real-time intelligent sensing and processing of RF signal traffic without conversion to the electronic domain. If successful, this exploratory study will surpass the Optalysys system by a factor of 100 and pave the way for a prototype demonstration in a follow-on effort.

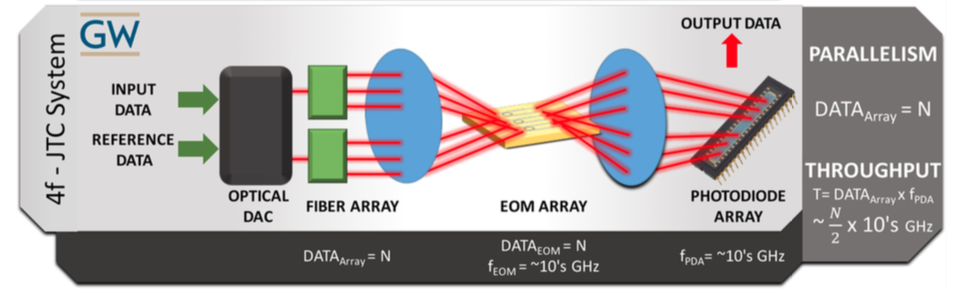

Methods/Approach: The key to our approach is GHz-rate data handling across the front-end data loading, the convolutional filtering, and the back-end data registration, in contrast to kHz-rate SLMs. Conceptually, we keep the data in the optical domain for longer and avoid electro-optic conversion where possible, or otherwise speed it up using integrated photonic devices operating at tens of GHz, which are readily available from silicon photonic foundries such as AIM.

Free-space (GEN-1) and Integrated Photonics (GEN-2) prototypes

GEN-1 System: The free-space 4f convolution system uses low-power laser light and digital micromirror devices (DMDs) to perform convolutions in parallel at high speed and resolution, using less energy than current CMOS-based technology. It could serve as the base unit of a CNN for intelligent decision-making, classification, and pattern recognition in challenging big-data problems.
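The convolution that a 4f system performs optically can be emulated numerically with the convolution theorem. The sketch below is only an idealized model, not the authors' implementation: it assumes the Fourier-plane pattern is the transform of a hypothetical 3x3 box kernel, ignores diffraction, aberrations, and DMD binarization, and models the camera as a pure intensity detector. The name `conv_4f` and all sizes are illustrative.

```python
import numpy as np

def conv_4f(image, kernel):
    """Idealized 4f system: lens, Fourier-plane mask, lens, camera."""
    # First lens: the field at the Fourier plane is the 2D transform of the input.
    spectrum = np.fft.fft2(image)
    # Fourier-plane mask (assumption): the transform of the zero-padded kernel.
    mask = np.fft.fft2(kernel, s=image.shape)
    # Second lens: back to real space. The inverse FFT stands in for the second
    # forward transform, which in a real system also flips the image.
    field = np.fft.ifft2(spectrum * mask)
    # Camera: records intensity, i.e. the squared magnitude of the field.
    return np.abs(field) ** 2

rng = np.random.default_rng(0)
image = rng.random((64, 64))          # stand-in for the pattern on the first DMD
kernel = np.full((3, 3), 1.0 / 9.0)   # hypothetical 3x3 box-blur kernel
intensity = conv_4f(image, kernel)
print(intensity.shape)
```

Because the FFT is periodic, this computes a circular convolution; the recorded value at each pixel is the square of the convolved amplitude, which is the squaring nonlinearity an intensity detector introduces.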

Data I/O: The interface between a PC or sensing platform and the optical system is designed to transfer input data to the DMDs at high speed. The high-speed interface will be implemented using FPGAs (100 Gbps x 128 links, 8 Tbps), which format and route the input data and signatures to the DMDs.

Data modulation: The system is based on two stages of Fourier transform (FT). A pattern is formed by shining laser light onto an electronically configured DMD (DLM 6500), whose more than 2 million programmable micromirrors modulate the laser intensity, encoding analog numerical data with a resolution of 8 bit at a rate of 1031 Hz (~20 kHz at 1 bit resolution). At the Fourier plane, the light pattern is combined with a second image, also displayed on a DMD, to perform the convolution. The result is then transformed back into real space by a second Fourier lens and detected by a CCD camera.

Read-out: The light resulting from the convolution between signature and input data is collected in parallel by a high-speed (1 kHz) camera with a resolution of 8 bit. The read-out could potentially serve as a nonlinear activation function, since the detected signal is proportional to the squared norm of the electric field.

Expected performance: The system leverages its high parallelism, which enables a computation speed of up to 275 Pbit/s. It can perform Fourier transform calculations 10 times faster than an Nvidia P6000 graphics card (100 GPixel/s, 12 TFlops, maximum power consumption of 250 W), using approximately 20 percent of the power. The throughput of the system is set by the resolution of the DMDs (1920x1080 at 8 bit), their update rate (~1 kHz), and the CCD camera frame rate (1 kHz).
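The throughput figures can be sanity-checked with simple arithmetic from the quoted DMD resolution, bit depth, and frame rate. The source does not state how the 275 Pbit/s figure is derived. The second calculation below is one possible accounting, labeled as an assumption, that lands on the same number: it counts a convolution over N pixels as N x N multiply-accumulates on two 8 bit operands per frame.

```python
# Figures quoted in the text.
WIDTH, HEIGHT = 1920, 1080   # DMD resolution in pixels
BITS = 8                     # bit depth of each displayed pattern
FRAME_RATE_HZ = 1_000        # ~1 kHz DMD update and camera frame rate

pixels = WIDTH * HEIGHT

# Raw data rate into a single DMD.
input_rate_bps = pixels * BITS * FRAME_RATE_HZ

# ASSUMPTION (not stated in the source): an N-pixel convolution counted as
# N * N multiply-accumulates, each on two BITS-wide operands, once per frame.
equivalent_rate_bps = pixels ** 2 * BITS * BITS * FRAME_RATE_HZ

print(f"{pixels:,} pixels per frame")
print(f"input data rate : {input_rate_bps / 1e9:.1f} Gbit/s")
print(f"equivalent rate : {equivalent_rate_bps / 1e15:.0f} Pbit/s")
```

Under this accounting the raw input rate is modest (tens of Gbit/s), and the large headline number comes entirely from the parallelism of the optical transform rather than from the frame rate.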

GEN-2 System: In Gen-2 we aim to replace the slow SLMs or DMDs of Gen-1 with an optical DAC front end and an Electro-Optic Re-programmable Array (EORA) integrated photonic chip in the Fourier plane. The DAC modulates the input signal and the reference in the image plane at 10 GHz, while the EORA performs the dot product in the Fourier domain, also operating at tens of GHz.
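The joint transform principle behind this architecture can be illustrated with a short numerical model. This is a textbook 1D JTC sketch under stated assumptions, not the proposed hardware: the signal and reference sit side by side in one input plane, a Fourier transform stands in for the lens, the Fourier-plane array is modeled as an ideal square-law detector that re-emits the joint power spectrum, and a second transform yields the cross-correlation. The function name `jtc_correlate`, the plane size, and the separation are hypothetical.

```python
import numpy as np

def jtc_correlate(signal, reference, n_plane=128):
    """Idealized 1D joint transform correlator (illustrative model only)."""
    signal = np.asarray(signal, dtype=float)
    reference = np.asarray(reference, dtype=float)
    n = len(signal)
    sep = n_plane // 4  # spacing between signal and reference in the input plane
    if len(reference) != n or sep < 2 * n:
        raise ValueError("need equal lengths and n_plane >= 8 * len(signal)")
    # Input plane: signal at the origin, reference shifted by `sep` samples.
    joint = np.zeros(n_plane)
    joint[:n] = signal
    joint[sep:sep + n] = reference
    # Lens plus square-law detection: the joint power spectrum.
    jps = np.abs(np.fft.fft(joint)) ** 2
    # Second transform (inverse FFT used here; for a real, even spectrum the
    # forward transform differs only by a scale factor).
    out = np.fft.ifft(jps).real
    # Autocorrelation terms sit around index 0; the cross-correlation sits
    # around index `sep`. Reverse it to match np.correlate(..., "full") order.
    return out[sep - (n - 1): sep + n][::-1]

rng = np.random.default_rng(1)
signal = rng.random(8)       # kernel-sized example, 8 elements
reference = rng.random(8)
corr = jtc_correlate(signal, reference)
print(np.allclose(corr, np.correlate(signal, reference, "full")))
print(np.isclose(corr[len(signal) - 1], np.dot(signal, reference)))
```

The zero-lag sample of the cross-correlation term equals the dot product of signal and reference, which is the quantity a convolutional layer needs for each kernel position.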

Expected performance: The latency of the optical JTC can be defined as the time between the first bit of input data arriving at the optical modulator and the detected signal arriving at the electronic interface to the computer. Similarly, the throughput can be defined as the operating frequency of the JTC times the number of kernel elements.

In the case of the 1D electro-optic JTC, the latency is the sum of the latencies of the optical DAC (or read-in modulator), the optical delay lines, the electro-optic SOI re-modulating array, the light time of flight, and the final optical detector. If we assume a modulation rate of 10 GHz, available from a single channel of a COTS QSFP+ fiber module, we have 0.1 ns for modulation. The required delay is the number of elements in the kernel times the modulation latency; for a small kernel size of 8, this is a delay of 0.8 ns. Each element of the SOI array combines a high-speed photodiode and an optical modulator. Modern integrated photonic receivers and modulators operate in excess of 20 GHz, so conservatively allocating 0.1 ns of latency for the re-modulator and another 0.1 ns for the correlation-detection photodiode array brings the total latency, excluding light time, to 1.1 ns. The light time is the time light takes to travel twice the length of the longest ray. For a lens with a 15 mm diameter and a 10 mm focal length this is 0.19 ns, bringing the total latency to 1.29 ns.

Since the signal input to the system is serial, throughput is the modulation rate divided by the number of elements, in this case 10 GHz/8 = 1.25 GHz. This speed is bounded at the input by restricting the input modulator to what is currently available as COTS with a single modulator, and by the components available in the AIM foundry for fabricating the re-modulator array. Adding parallel non-COTS modulators could easily bring the operating rate beyond 10 GHz. Research-grade modulators have been demonstrated in excess of 170 GHz.

The 2D nonlinear optical film JTC has performance similar to that of the electro-optic JTC in a single layer, as the operating frequency is bounded by the read-in modulator and read-out photodiode. However, the advantage of the nonlinear film JTC becomes apparent with the introduction of multiple layers, as used in deep convolutional neural networks. With a nonlinear film, the signal no longer needs to be converted back to an electrical signal at each intermediate layer. Each additional layer added between the read-in/out electro-optics does not add to the system latency as long as the cumulative delay is shorter than the operating period of the electro-optics.
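The latency budget quoted above for the 1D electro-optic JTC can be tallied explicitly. The sketch below uses only the numbers given in the text; the delay-line term is taken as kernel size times the 0.1 ns modulation period (0.8 ns for 8 elements), which is the value the 1.1 ns subtotal implies. The 0.19 ns light time is copied as quoted rather than derived, and the variable names are illustrative.

```python
MOD_RATE_HZ = 10e9   # single-channel COTS modulation rate quoted in the text
KERNEL_SIZE = 8      # small example kernel from the text

bit_period_ns = 1e9 / MOD_RATE_HZ   # 0.1 ns per modulated sample

budget_ns = {
    "input modulation": bit_period_ns,
    "optical delay lines": KERNEL_SIZE * bit_period_ns,
    "re-modulator array": 0.1,      # conservative allocation from the text
    "detector array": 0.1,          # conservative allocation from the text
}
LIGHT_TIME_NS = 0.19  # time of flight, as quoted (15 mm lens, 10 mm focal length)

subtotal_ns = sum(budget_ns.values())
total_ns = subtotal_ns + LIGHT_TIME_NS
throughput_hz = MOD_RATE_HZ / KERNEL_SIZE  # serial input: one kernel per 8 samples

for stage, value in budget_ns.items():
    print(f"{stage:<20s} {value:.2f} ns")
print(f"{'subtotal':<20s} {subtotal_ns:.2f} ns")
print(f"{'total with light':<20s} {total_ns:.2f} ns")
print(f"throughput           {throughput_hz / 1e9:.2f} GHz")
```

Laid out this way, the delay lines dominate the budget and scale linearly with kernel size, while the other terms are fixed by the device speeds.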

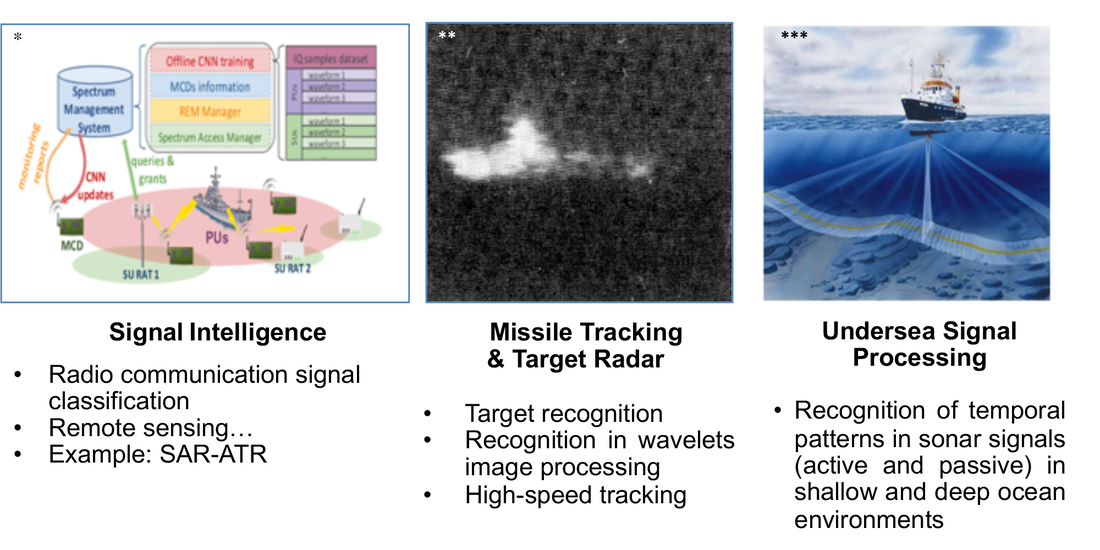

DOD Applications

Naval relevance: Gen-1 offers vast computational parallelism (up to 1920×1080 px), provided by the spatial resolution of the DMDs, and 8 bit data resolution, but comparatively low speed (a few kHz). It is therefore best suited to applications that must handle large amounts of data without converting them to the electronic domain or allocating memory. On-the-fly image filtering and image processing for neural network tasks, such as super-resolution in an off-line trained CNN, are only a few examples. Gen-2, on the other hand, is particularly suited to speeding up processes to real time (~0.1 ns) that require convolutions of a limited amount of data (limited only by the few hundred input fibers).

Gen-2 can be used to correlate multiple incoming signals travelling through fibers with unknown delays, as found in radar and RF signal processing, in order to continuously monitor and instantaneously track harmful threats across a large portion of the spectrum in Electronic Warfare tasks. The convolved signals could also provide a potential means of encryption or decoying, using time-varying analog RF signals to convey encrypted or misleading information. Gen-2 could potentially perform CNN tasks such as signal intelligence and classification, in which both training (updating the “weights” of the EORA) and inference (run time) need to happen on a nanosecond time scale. Moreover, because the data are conveyed through fibers with almost negligible latency, this kind of computing can happen closer to the edge of the network, allowing important data to be analyzed in near real time instead of being sent over long routes to data centers or the cloud.
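Correlating a received signal against a reference to recover an unknown delay is the operation the optical correlator would carry out in hardware. As a purely numerical illustration, the sketch below estimates the delay from the peak of an FFT-based cross-correlation. The waveform, noise level, and 37-sample delay are made-up test values, and `estimate_delay` is a hypothetical helper, not part of the proposed system.

```python
import numpy as np

def estimate_delay(received, reference):
    """Return the lag, in samples, at which `received` best matches `reference`."""
    n = len(received) + len(reference) - 1
    # Cross-correlation via the correlation theorem, zero-padded to length n
    # so that circular wrap-around does not mix positive and negative lags.
    spectrum = np.fft.rfft(received, n) * np.conj(np.fft.rfft(reference, n))
    xcorr = np.fft.irfft(spectrum, n)
    lag = int(np.argmax(xcorr))
    # Indices at or beyond len(received) correspond to negative lags.
    return lag if lag < len(received) else lag - n

rng = np.random.default_rng(2)
reference = rng.standard_normal(64)            # hypothetical known waveform
true_delay = 37                                # made-up delay in samples
received = 0.1 * rng.standard_normal(256)      # weak background noise
received[true_delay:true_delay + 64] += reference
print(estimate_delay(received, reference))
```

In the optical system the same peak would appear in the correlation plane at a position set by the delay, without the signals passing through a digital FFT.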

Copyright 2019 - George Washington University